AI in education: more hype than help?

There was a lot of fuss over AI at the huge EdTech show in London, but how much of it amounted to gimmickry?

“What really stood out to me was that while these systems are very complex, they’re often based on simple ideas and are not always appropriate for education,” said UNSW PhD student and researcher Kevin Witzenberger, who attended the show.

One AI program he saw gave the teacher a read-out of students’ emotions in the classroom based on facial recognition technology.

“But what value does that really add? I am critical of a machine that says a student is 67.8% happy.”

“We should not see these numbers as complete truths when they come from a machine. We should instead be asking what is the value in something like an emotional score, because aren’t teachers better placed to deal with the emotional wellbeing of their students than a camera paired with an algorithm?” Witzenberger said.

Witzenberger wants to see a greater understanding of data and greater control over how it is used, and he is using his PhD, titled The Impact of Artificial Intelligence on Education Policy, to look into the area.

The Scientia Scholarship student from UNSW Arts & Social Sciences is interested in automated roll calls via facial recognition, online student verification through keystroke analysis and student dropout or success predictions.

Another area of concern for AI in education, Witzenberger says, is the potential for bias in automated systems.

“Machine learning is the part of AI that learns to make its own rules and decisions in a better way, or to make a prediction,” he says.

“So, if I want to identify faces in the classroom, then I’d have to feed it a lot of faces that I choose first which will then determine the way the system identifies people.”

Sadly, this can often be discriminatory. When Amazon used an AI algorithm in its recruiting process, it later discovered that the algorithm screened out female applicants.

“They tried to build an AI that determined employability based on the CVs people sent in. But the definition of employability was built around only the previously successful applicants,” he says.

And because they had hired more men in the past, it became a very sexist form of screening.

“Looking at these issues shows us the problems we need to avoid in education. Therefore, the development of these technologies also needs to be monitored,” he says.

We don’t want a system that steers female students away from STEM subjects just because there have been more male enrolments in the past.

“I’m not always trying to look at just the negative side of AI, but it’s just that there’s so much of it.”

For the project part of his PhD, Witzenberger intends to develop a prototype that would give students more control over the way their data is analysed, which is otherwise often concealed in a black box.

He says commercial organisations tend to hide how decisions are made behind a black box of intellectual property, which means users don’t really know how their data is being used.

“And the owners of these black boxes do not always want the user to know what data they’re actually using,” he says. “It’s all supposed to operate a little bit under your consciousness.”

Witzenberger says he wants to build a prototype with students that is very open and consensual, and not based on obsessive features and invasiveness.

“AI as we know it in education is deeply embedded into the context of powerful technology companies such as Google, Microsoft and Amazon,” he says.

For this reason, his research will focus on rethinking “some of the recent trends around the datafication and digitalisation of education by giving the power back to the user.”

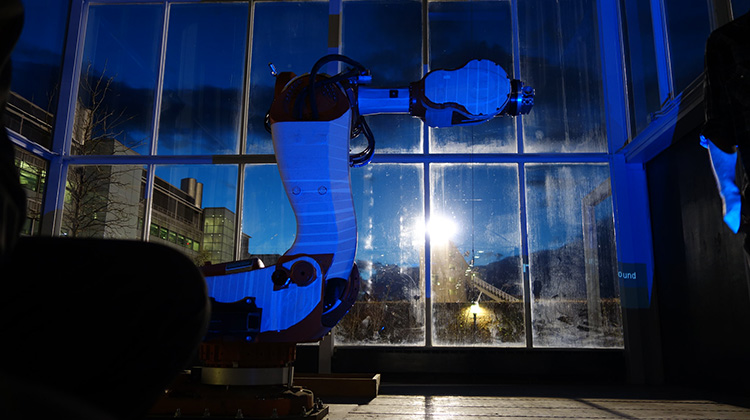

Image: Machine Yearning by Gene Kogan, under Flickr CC Attribution license